For a personal project I wanted to create a user interface inspired by old computer text modes (like DOS-based software) while still feeling futuristic.

After a bit of research I decided to write my own system instead of using the native UMG/Slate UI system available in Unreal Engine 4. The main motivation was the need to simulate bloom over the interface: bloom requires blurring the interface and then combining the blurred copy back with the original. I also disliked the UMG editor and therefore preferred to build my own tool to better suit my needs.

This was achieved by creating my own set of shaders to draw widgets, custom render targets and setups via the Blueprint editor.

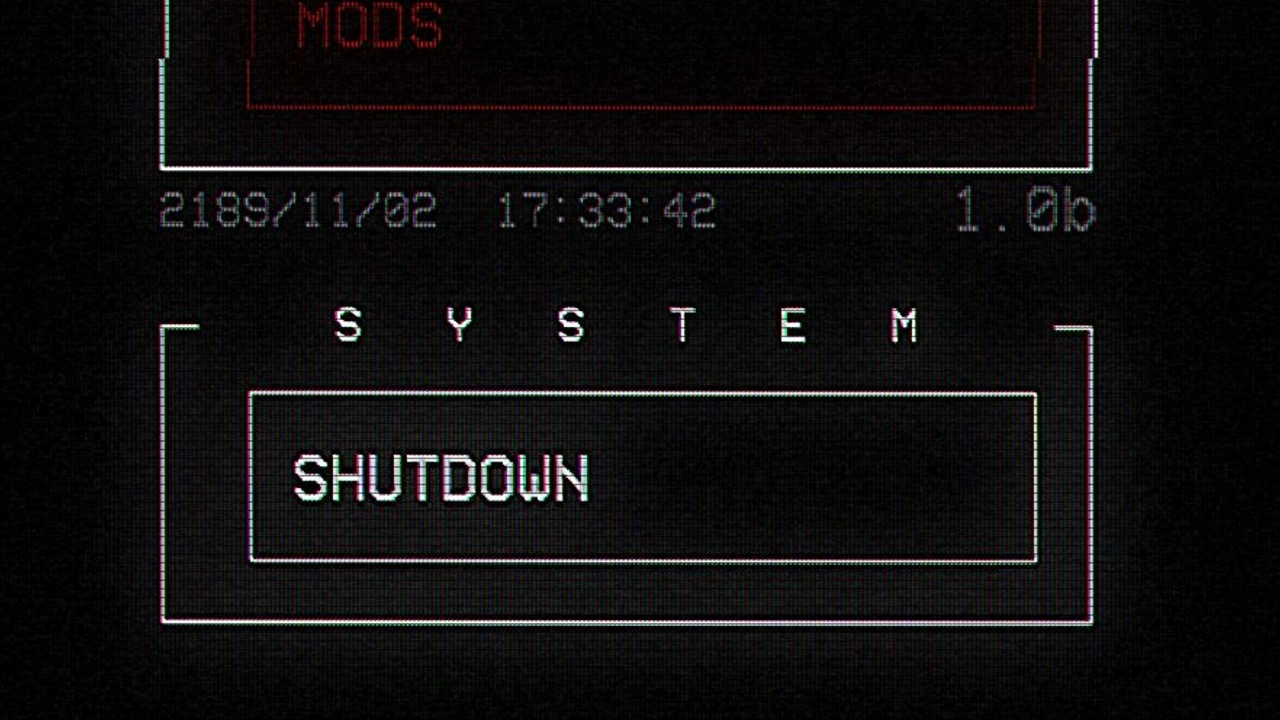

Here is the loading sequence and display of a potential main menu:

Another motivation for this UI system was to be able to list locations of a 3D environment and zoom in on a 3D terrain. The visuals were pretty much done, except for the dynamic zoom system:

Animations were handled via timelines within Blueprints, which made it easy to loop animations and control both shaders and sound events.

This UI system was also used to handle the player's HUD (Heads Up Display).

I also made a fade-in/fade-out transition effect when going from the game to the menu with the help of a post-process material and some camera animations.

At some point, I tried creating a UI via regular shaders placed on meshes in the scene/level. It offered some interesting vertex-based animations. I even tried to combine that with some glitch and chromatic aberration post-process effects.

To display a 3D terrain in my UI I decided to give raymarching a try. It is a method for rendering 3D shapes that has become quite popular for real-time rendering of various subjects. In my case I used it to compute a terrain based on an elevation texture (heightmap) generated in Substance Designer.
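To give an idea of what raymarching a heightmap involves, here is a minimal CPU-side sketch in Python. It is not the shader used in this project: the fixed step size, the nearest-neighbour lookup and the names `sample_height` and `raymarch_heightmap` are all assumptions made for illustration. The ray advances in small steps and stops as soon as it drops below the terrain height at its current position.

```python
import math

def sample_height(heightmap, u, v):
    """Nearest-neighbour lookup in a 2D list of heights, with u and v in [0, 1]."""
    rows, cols = len(heightmap), len(heightmap[0])
    x = min(cols - 1, max(0, int(u * (cols - 1) + 0.5)))
    y = min(rows - 1, max(0, int(v * (rows - 1) + 0.5)))
    return heightmap[y][x]

def raymarch_heightmap(heightmap, origin, direction, step=0.01, max_steps=1000):
    """March a ray over the unit square (x, z) with y pointing up.

    Returns the first sampled point that lies on or below the terrain,
    or None if the ray leaves the terrain bounds or runs out of steps.
    """
    length = math.sqrt(sum(c * c for c in direction))
    dx, dy, dz = (c / length for c in direction)
    px, py, pz = origin
    for _ in range(max_steps):
        # Stop as soon as the ray is outside the heightmap footprint.
        if not (0.0 <= px <= 1.0 and 0.0 <= pz <= 1.0):
            return None
        # Hit: the ray is at or under the terrain surface.
        if py <= sample_height(heightmap, px, pz):
            return (px, py, pz)
        px += dx * step
        py += dy * step
        pz += dz * step
    return None
```

In a real shader the same loop would run once per pixel, and the hit position would then be shaded (for example from the local slope of the heightmap).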

The images below come from a simple shader acting as a renderer, not from an actual 3D mesh:

I also added support for shadows. The computation is actually wrong, but I liked the result anyway so I kept it as-is since it kind of faked some sort of Global Illumination. Because the system is generic, it can accept any kind of heightmap as input:

As mentioned above, one of the main goals of this custom UI system was to generate some bloom over it. Initially I used a regular two-pass box blur, but the results weren't satisfying enough.
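For reference, a two-pass box blur averages pixels horizontally first, then vertically, which gives the same result as a full square kernel at a lower cost. The sketch below shows the idea on a plain 2D list of values. The function names and the clamped edge handling are assumptions for illustration, not the shader code from this project.

```python
def box_blur_1d(values, radius):
    """Average each value with its neighbours within `radius`, clamping at the edges."""
    n = len(values)
    out = []
    for i in range(n):
        total = 0.0
        for k in range(-radius, radius + 1):
            j = min(n - 1, max(0, i + k))  # clamp the index to the valid range
            total += values[j]
        out.append(total / (2 * radius + 1))
    return out

def box_blur_two_pass(image, radius):
    """Blur the rows first, then the columns of the intermediate result."""
    horizontal = [box_blur_1d(row, radius) for row in image]
    # Transpose, blur what are now rows (the original columns), transpose back.
    columns = [box_blur_1d(list(col), radius) for col in zip(*horizontal)]
    return [list(row) for row in zip(*columns)]
```

A single bright pixel blurred with `radius=1` spreads evenly over a 3x3 square, which illustrates why the result looks blocky and why a plain box blur can be a weak basis for bloom.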

I went another route and ended up using a distance field generated from the UI alpha channel. The distance field let me expand the widget and text shapes, which I then blurred with multiple circular blurs. Combining these two methods helped preserve the details and made the bloom wider and more noticeable. I wrote about the distance generation in this article: Realtime Distance Field Textures in Unreal Engine 4.
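The sketch below illustrates the general idea of expanding a shape from its distance field. It uses a brute-force search for the nearest opaque pixel, which is far too slow for real-time use and is not the generation method described in the linked article. The names `distance_field` and `expand_shape`, the alpha threshold and the linear falloff are all assumptions chosen to keep the example short.

```python
import math

def distance_field(alpha, threshold=0.5):
    """For every pixel, distance (in pixels) to the nearest pixel with alpha >= threshold."""
    height, width = len(alpha), len(alpha[0])
    inside = [(x, y) for y in range(height) for x in range(width)
              if alpha[y][x] >= threshold]
    field = []
    for y in range(height):
        row = []
        for x in range(width):
            if not inside:
                row.append(math.inf)  # no shape at all in the image
            else:
                row.append(min(math.hypot(x - sx, y - sy) for sx, sy in inside))
        field.append(row)
    return field

def expand_shape(field, spread):
    """Turn distances into a mask: 1 on the shape, fading linearly to 0 at `spread` pixels."""
    return [[max(0.0, 1.0 - d / spread) for d in row] for row in field]
```

The resulting mask is a fattened version of the original shape, which can then be blurred and added back over the sharp UI to produce a wider glow.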

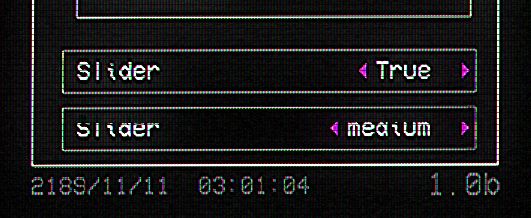

In order to draw a custom UI I needed to create a widget system. The visuals of a widget were handled via a custom shader which had a single texture as input. The shader would split the texture into 9 blocks, which made it possible to resize the texture and widget at will:
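This 9-block split is commonly known as 9-slice scaling: the corners keep their pixel size, while the edges and the center stretch. Here is a rough sketch of the coordinate remapping, written in Python rather than as a shader. The function names, the pixel-based parameters and the single uniform border size are assumptions for illustration, and the widget is assumed to be larger than twice the border.

```python
def nine_slice_axis(pos, widget_size, texture_size, border):
    """Map a pixel position along one widget axis to a normalized texture coordinate."""
    if pos < border:
        # Leading border: copied 1:1 from the start of the texture.
        texel = pos
    elif pos > widget_size - border:
        # Trailing border: copied 1:1 from the end of the texture.
        texel = texture_size - (widget_size - pos)
    else:
        # Middle section: stretched to fill whatever space remains.
        t = (pos - border) / (widget_size - 2 * border)
        texel = border + t * (texture_size - 2 * border)
    return texel / texture_size

def nine_slice_uv(x, y, widget_w, widget_h, texture_w, texture_h, border):
    """Apply the same remapping on both axes to get a (u, v) pair."""
    return (nine_slice_axis(x, widget_w, texture_w, border),
            nine_slice_axis(y, widget_h, texture_h, border))
```

With a 32 pixel texture, an 8 pixel border and a 200 pixel wide widget, the first and last 8 pixels of the widget read the texture borders unscaled, while the 184 pixels in between share the 16 central texels.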

Because I was using a custom system, I handled the widget size and placement via simple wireframe boxes in the Blueprint. With the game running at the same time, I could preview the result live.

Once this was in place, I drew a few patterns on paper to get some ideas of what types of widgets and layout I wanted. I then used Substance Designer to build these patterns and feed them to the shader:

Here are some examples of the type of input texture I used to draw the widget borders: